At this year’s Google I/O developer conference, CEO Sundar Pichai spoke of how augmented reality (AR) glasses embedded with Google’s real-time translation services could break down the language barrier in face-to-face communication. While not explicitly announcing any hardware, he did show a video with a pair of glasses with a heads-up display that would show the results of Google’s real-time translation technology as “subtitles for the world.”

Taking another run at the ill-fated Google Glass vision is a game-changer and speaks to the maturity and deep pockets of Google as a corporation. Taking lessons learned from Google Glass 1.0, the company has improved the technology to a point where it’s less interruptive (and doesn’t make you look like a cyborg) and ready for more widespread adoption.

We are tiptoeing into the post-computer world where “technology fades into the background,” letting us push unnatural hardware interfaces and interruptive notifications out of human-to-human interaction and realize the true vision of AR: to augment the world around you.

Combine this “subtitles for the world” mentality with another Google Lens enhancement, Scene Exploration, and now you have useful metadata from Google’s Knowledge Graph overlaid on the world around you. Check out the video below, which jumps to the demo of how Google envisions you using Scene Exploration to learn about the contents of items on the shelf at the grocery store.

Exciting times! The caveat is that, as with all real-world technology, things will be rough in the beginning. I work in a Japanese company, and sometimes we turn on the real-time translation AI in Google Hangouts to see if we can get a decent translation of the meeting. Let me just say the results are not quite there yet. As Pichai said, there’s a lot of work to do.

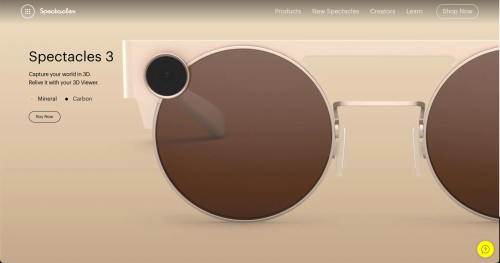

The competition has not stood still either. Facebook’s Smart Glasses focus, as you would expect, on capture and sharing features, with a light that goes on to warn you if someone is filming. Snapchat’s Spectacles (pictured below) overlay 3D filters on whatever you look at through the glasses, bringing the Snapchat Lens experience to the world around you. Leave the psychedelics at home. The future is here; we just need to improve the software.