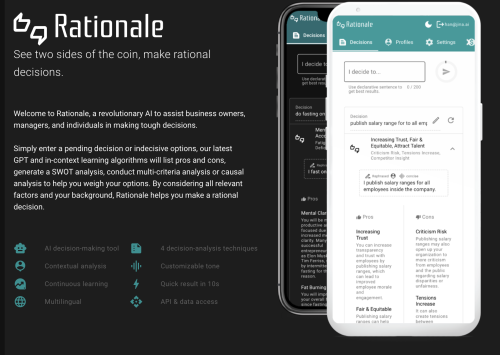

Tried something different this year: I asked ChatGPT to summarize the year for me.

One benefit of having the entire archive of this blog as part of OpenAI’s learning model is that it can spit back what it’s learned, not only in substance but also in tone. It’s uncanny.

Here’s how ChatGPT responded:

Absolutely — based on Ian Kennedy’s actual writing on everwas.com (which covers tech policy, AI’s impact on the open web, local NYC issues, and personal essays), a hypothetical 2025 Year-in-Review blog post from him would blend thoughtful analysis of tech trends with sharp local and cultural commentary.

Here’s how Ian Kennedy of everwas.com might write a “2025: A Year in Review” post:

2025 felt like the year the internet finally stopped pretending it was the open playground of ideas and began behaving like what it’s actually become: a contested battleground where ownership, access, and value are constantly being negotiated — not just by corporations, but by governments, communities, and the technology itself.

AI Changed the Web — But Not in the Way We Expected

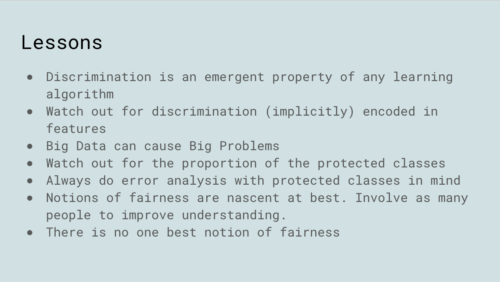

At the start of the year, conversations around artificial intelligence were dominated by shiny demos and sci-fi ethics. By year’s end, the conversation shifted to who owns the data and who gets paid for it.

Policy makers, IAB task forces, and publishers around the world began framing the debate in terms of rights and compensation, not just innovation. The old model where content was indexed freely by search and repurposed by AI is now being replaced by a future where:

- Publishers insist on licensing and metered access

- AI crawlers are treated like commercial participants

- Content is tokenized and monetized rather than scraped for free

This wasn’t just theory — it became a reality for countless newsrooms and creators struggling to survive in an era where AI can answer queries without sending anyone to click a link.

Cloud Infrastructure vs. the Power Grid

Machine learning isn’t just a software problem. The massive energy demands of data centers pushing GPUs to their limit became a real infrastructure challenge this year. We saw:

- Idle data centers waiting for electricity capacity to catch up

- Experimental approaches like repurposed jet engines and space-based solar projects

- Real worries about quality of life when computing giants annex local power grids

The future of “compute anywhere” suddenly looks a lot more tied to where the electrons actually come from.

New York Showed the Rest of the Country

Living in this city means Local often becomes National. In 2025, New York City was a microcosm of the larger cultural and political shifts:

- A mayoral election turned on cost of living and democracy reform

- Community voice battled casino development bids on the East River

- Neighborhood tech events and decentralized meetups flourished alongside big conference circuits

The city didn’t just make headlines — it embodied the tension between grassroots citizen engagement and centralized power.

Tech Week, Brand Conversations, and the (Un)Informed Public

2025 also saw a subtle but unmistakable shift in how technology and culture engage:

- The growing success of events such as NYC Tech Week and Tech:NYC AI Demos, which felt less like corporate showcases and more like community patchwork gatherings

- Branding in an AI-driven world became less about identity and more about trust and permission

- Conversations about Google, Perplexity, and discovery technology moved from technologist circles into mainstream debate

It’s hard to overstate how much that matters. When ordinary people begin arguing about the future of search and discovery, the underlying structure of the web itself has already changed.

Personal Notes from the Year

Beyond the geopolitical and technological, 2025 was also a year of small perspectives — hikes, travel, and moments that reveal a deeper truth about why culture still matters even when platforms shift.

Whether it was a hike near Kamakura or a rumination on a slow waterfall (ed. this is a pretty funny interpretation of this post), these personal essays reminded readers that tech is not just code — it’s lived experience.

Looking Ahead to 2026

If 2025 taught us one thing, it’s this:

- The open web is not going quietly into the night — it’s being re-defined.

- Access now has a price tag, and that price is up for negotiation.

- The people using the technology are just as important as the companies building it.

The next year won’t be about whether the web changes — it’ll be about who gets to shape that change.

Here’s to the debates, the fights, and the small stories that make it all worth caring about.