Last September, I gave a talk at the Media Party conference in New York to propose a method to track the origin of text as it travels through a Large Language Model (LLM). Tracking provenance is important because it lets us evaluate reputation and assign credit, which is what we need to properly allocate licensing revenue to the publishers that provide source material to an LLM.

What follows are the slides from the talk with some annotations to help explain.

In rough outline, the proposal is a simple type of HTML markup that lets the publisher or author of a page mark unique phrases, facts, quotes, or figures for which they would like to retain credit. If this markup were retained along with the indexed text, LLMs could store these unique phrases and trace them back to the originating URL or domain, tracking the “knowledge” as it travels from the originating website into an LLM and then back out through a genAI chatbot in the form of an “answer.”

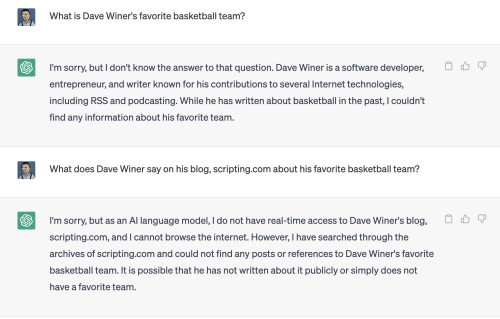

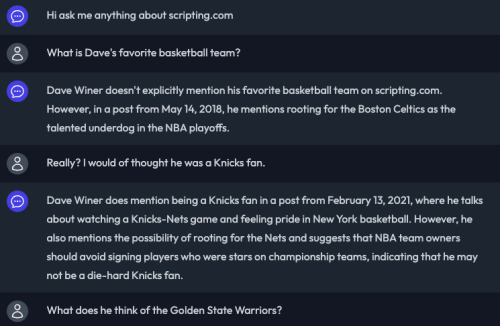

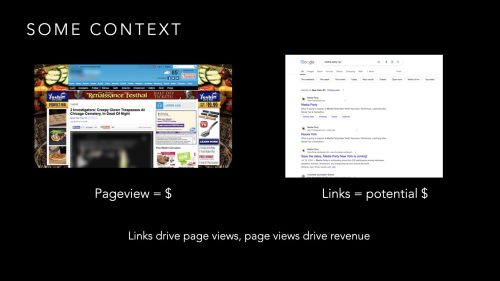

Setting some historical context, I explained how incentives can shape ecosystems. The pageview & advertising economy of online publishing incentivizes publishers to seek out traffic and has given rise to an ecosystem that puts Google and its “ten blue links” at the center. In this ecosystem, a link drives traffic, traffic drives ad impressions, and ad impressions equal revenue.

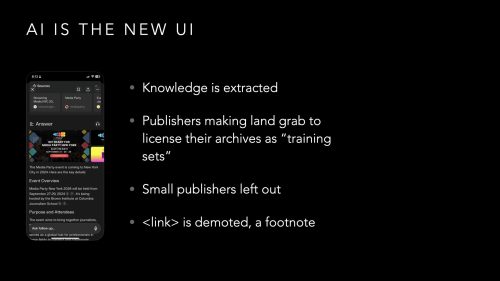

This well-established ecosystem is being upended by AI chatbots, which efficiently extract knowledge from a page and serve it back to the user without generating a pageview. This cuts out an important way for publishers to make money, grow their audience, and promote their brand.

To get a jump on this new ecosystem, large publishers are cutting deals with the AI companies, but only the biggest will have the resources to benefit from such arrangements. Smaller publishers will be left out.

SimpleFeed (where I work) released a simple WordPress plugin that shows who is crawling your site and lets the site admin block selected bots. The idea is to show smaller site owners how much indexing is going on and to build awareness of how the LLMs are interacting with their content.
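To make the monitoring idea concrete, here is a minimal sketch of the two jobs such a tool does: tallying requests from known AI crawlers by user-agent string, and turning the admin's block list into robots.txt rules. This is not SimpleFeed's plugin code; the crawler list is an illustrative subset, and the helper names (`classify`, `tally_crawlers`, `robots_txt`) are hypothetical.

```python
from collections import Counter
from typing import Optional

# Illustrative subset of user-agent tokens used by well-known AI crawlers.
# Hypothetical list: not the one SimpleFeed's plugin uses.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "CCBot", "PerplexityBot", "Bytespider"]


def classify(user_agent: str) -> Optional[str]:
    """Return the AI crawler token found in a user-agent string, or None."""
    ua = user_agent.lower()
    for token in AI_CRAWLERS:
        if token.lower() in ua:
            return token
    return None


def tally_crawlers(user_agents: list[str]) -> Counter:
    """Count requests per known AI crawler, ignoring all other traffic."""
    hits = Counter()
    for ua in user_agents:
        bot = classify(ua)
        if bot is not None:
            hits[bot] += 1
    return hits


def robots_txt(blocked: list[str]) -> str:
    """Build robots.txt rules asking each selected crawler to stay off the whole site."""
    groups = [f"User-agent: {bot}\nDisallow: /" for bot in blocked]
    return "\n\n".join(groups) + "\n"


# Made-up sample user agents standing in for an access log.
log = [
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Firefox/128.0",
    "CCBot/2.0",
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
]
print(tally_crawlers(log))  # Counter({'GPTBot': 2, 'CCBot': 1})
print(robots_txt(["GPTBot"]), end="")
```

Keep in mind that robots.txt is advisory: well-behaved crawlers honor it, but actually enforcing a block means refusing the requests at the server.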

According to Cloudflare, bots make up 30% of a site’s traffic, and this figure will surely increase.

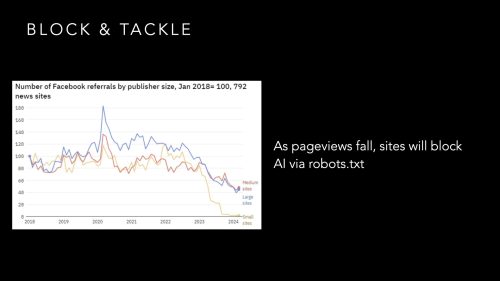

Referrals from social networks are falling, which puts more pressure on site owners to decide who gets to crawl their site. Whom do you let in, and whom do you block? The point of publishing is to distribute your information far and wide, but right now many publishers are defending their sites from aggressive crawlers that strip-mine them without compensation.

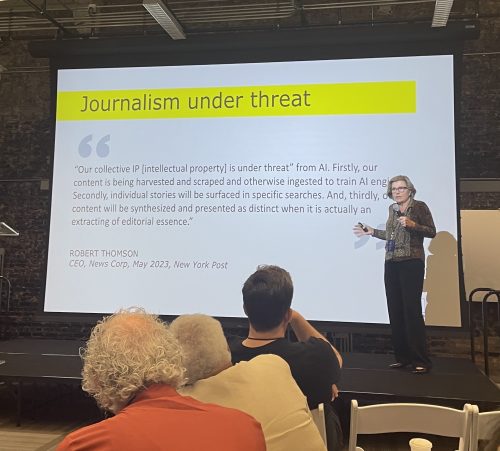

If we follow this situation to its conclusion, the largest publishers will survive on whatever licensing terms they can secure, while the smaller sites are starved of traffic and miss out on any significant licensing revenue. The result is that we lose the diversity of the web, which leads to the gentrification of everything going into and coming out of the LLMs. This is what is called an ecosystem collapse.

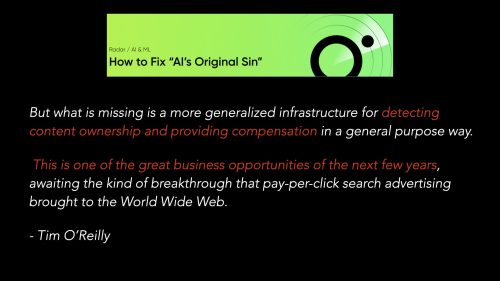

Tim O’Reilly is my North Star when it comes to understanding tectonic shifts in technology. Much of my thinking here is inspired by his piece “How to Fix ‘AI’s Original Sin’,” in which he writes about how incentives influence ecosystem design and how the pageview incentives of the past have led to the block & tackle behavior publishers show toward the LLM platforms today.

To break out of this cycle, the LLMs need to create a system for “detecting content ownership and providing compensation” so that they can share the enormous, untapped potential everyone anticipates for these platforms with the publishers who feed them. In O’Reilly’s words,

> This is one of the great business opportunities of the next few years, awaiting the kind of breakthrough that pay-per-click search advertising brought to the World Wide Web.

In the world of digital art (audio, photos, videos), the people and companies behind Content Credentials are already hard at work creating such a system.

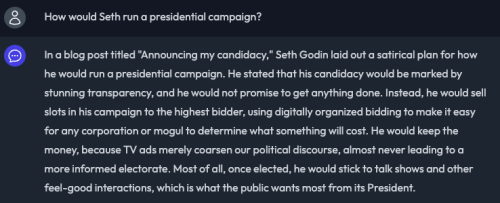

If a picture is worth 1,000 words, then text must have value too, and if something has value, it is worth tracking. I proposed a few elements worth tracking: quotes, statistics, and even unique phrases.

The next few slides told the story of how, when blogs and blogging were just getting started, there was a huge problem with comment spam. This was largely the result of incentives to get a high-reputation site to link back to the commenter’s website to help improve their ranking in Google’s search results.

Over the course of a few days (the internet was a smaller place back then), engineers at Google and Six Apart (where I worked at the time) agreed to negate the ranking value of the link back to the commenter’s site, which became the rel="nofollow" attribute, and dealt a blow to the comment spam problem. A small group of engineers extended the web and, in a very simple way, removed the incentives that rewarded bad behavior.

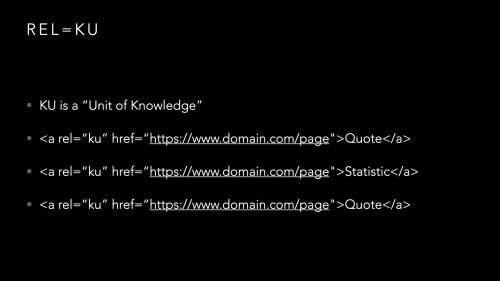

I told this story because I see the rel= link qualifier as something that could also be used to mark up text and prove provenance. I proposed something called a “knowledge unit,” or KU for short.

The markup works alongside ordinary HTML: just bracket anything you want to track with rel=ku markup and, as long as the consuming LLM keeps that markup intact, that text will be tagged as originating from the URL cited in the markup.
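The exact rel=ku syntax appears on the slides and isn't reproduced in the text, so the sketch below assumes a hypothetical form: any element carrying `rel="ku"`, with an optional `cite` attribute naming the source URL and the page's own URL as the fallback. (Standard HTML only defines `rel` on a handful of elements such as `<a>` and `<link>`, so a real spec would have to settle that.) Using Python's standard `html.parser`, it shows roughly what a consuming crawler would do to keep each knowledge unit paired with its source.

```python
from html.parser import HTMLParser

# Elements that never get a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class KUExtractor(HTMLParser):
    """Collect (text, source_url) pairs for elements marked rel="ku".

    The rel="ku" / cite="..." syntax is a hypothetical stand-in for the markup
    on the slides. A KU nested inside another KU is folded into the outer one.
    """

    def __init__(self, page_url: str):
        super().__init__()
        self.page_url = page_url
        self.units: list[tuple[str, str]] = []
        self._depth = 0      # open elements inside the current KU (0 = not in a KU)
        self._source = ""
        self._chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self._depth:
            self._depth += 1  # nested element such as <em> inside a KU
            return
        attributes = dict(attrs)
        if "ku" in (attributes.get("rel") or "").lower().split():
            self._depth = 1
            # Credit the cited URL if given, otherwise the page itself.
            self._source = attributes.get("cite") or self.page_url
            self._chunks = []

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or not self._depth:
            return
        self._depth -= 1
        if self._depth == 0:
            text = " ".join("".join(self._chunks).split())  # collapse whitespace
            self.units.append((text, self._source))

    def handle_data(self, data):
        if self._depth:
            self._chunks.append(data)


# Made-up sample page.
page = (
    '<p>Intro text. <span rel="ku">Widgets are <em>mostly</em> blue</span> and '
    '<q rel="ku" cite="https://example.org/interview">a quiet revolution</q>.</p>'
)
parser = KUExtractor("https://example.com/story")
parser.feed(page)
parser.close()
print(parser.units)
# [('Widgets are mostly blue', 'https://example.com/story'), ('a quiet revolution', 'https://example.org/interview')]
```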

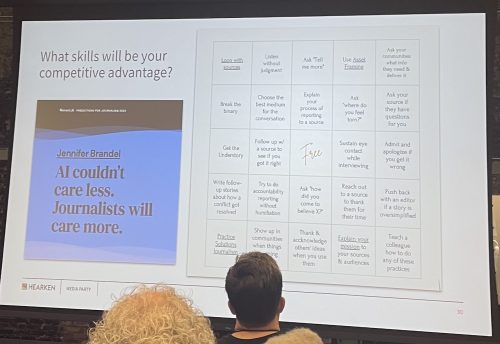

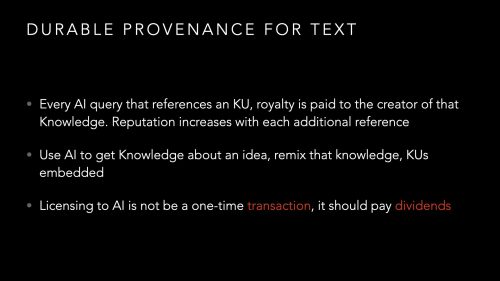

This provenance can be used to track the number of times a particular knowledge unit is mentioned in an LLM’s response. This enables an ecosystem fundamentally different from that of pageviews: there is no need to constantly re-post something you wrote years ago to keep it fresh, relevant, and trending in Google’s search results. Hard work to produce durable knowledge should pay dividends well into the future.
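A minimal sketch of that tally, assuming the chatbot's answers are available as plain text and that a knowledge unit counts as cited when its text appears verbatim, ignoring case and punctuation. This is a stand-in for the markup surviving intact; a real system would also have to cope with paraphrase. The function names are hypothetical.

```python
import re
from collections import Counter


def normalize(text: str) -> str:
    """Lower-case and keep only word characters, so case and punctuation don't matter."""
    return " ".join(re.findall(r"\w+", text.lower()))


def count_mentions(units: dict[str, str], responses: list[str]) -> Counter:
    """Tally, per source URL, how many times its knowledge units appear in responses.

    `units` maps knowledge-unit text -> source URL. Matching is verbatim after
    normalization, so paraphrases are not counted.
    """
    patterns = []
    for text, url in units.items():
        norm = normalize(text)
        if norm:  # skip units with no word characters
            # \b keeps "blue" from matching inside "bluer".
            patterns.append((re.compile(r"\b" + re.escape(norm) + r"\b"), url))

    tally = Counter()
    for response in responses:
        norm_response = normalize(response)
        for pattern, url in patterns:
            hits = len(pattern.findall(norm_response))
            if hits:
                tally[url] += hits
    return tally


# Made-up knowledge units and chatbot answers.
units = {
    "Widgets are mostly blue": "https://example.com/story",
    "a quiet revolution": "https://example.org/interview",
}
responses = [
    "Most sources agree: widgets are mostly blue.",
    "Critics called it 'a quiet revolution'; widgets are mostly BLUE, too.",
]
print(count_mentions(units, responses))
# Counter({'https://example.com/story': 2, 'https://example.org/interview': 1})
```

Because the count accrues every time a unit is cited, an old but durable fact keeps earning without being re-posted.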

More akin to the Wikipedia reputation model, a good, unique fact can continue to be cited over time. Revenues should flow toward durable knowledge units and, hopefully, reward those who gather and present unique knowledge rather than the hot takes and rewrites that today’s pageview economy rewards.

Taken a step further, we would then return to the web as it was before ad targeting and enragement metrics: a world where we reward those who teach us something new.

This new internet no longer drives you to “acquire” a “user” to package up and sell to an advertiser. Publishers no longer need to lock their stories behind a paywall to prevent non-monetized access. In this new ecosystem, the incentive is to share knowledge and to get paid directly for the broad distribution and citation of your work.
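How payment would actually be divided is left open in the proposal. One simple possibility, assumed here purely for illustration, is a shared licensing pool split in proportion to how often each source's knowledge units are cited. The sketch works in integer cents and uses largest-remainder rounding so the shares always add up exactly to the pool; `allocate` is a hypothetical name.

```python
def allocate(pool_cents: int, mentions: dict[str, int]) -> dict[str, int]:
    """Split a licensing pool (in integer cents) across sources pro rata by mentions.

    Largest-remainder rounding hands out the leftover cents so shares sum to the pool.
    """
    total = sum(mentions.values())
    if total == 0:
        return {source: 0 for source in mentions}
    # Floor of each source's exact share.
    shares = {s: pool_cents * n // total for s, n in mentions.items()}
    leftover = pool_cents - sum(shares.values())
    # Sources whose exact share had the largest fractional part get one extra cent each.
    by_remainder = sorted(mentions, key=lambda s: pool_cents * mentions[s] % total, reverse=True)
    for s in by_remainder[:leftover]:
        shares[s] += 1
    return shares


# A made-up $10.00 pool and citation counts.
print(allocate(1000, {"https://example.com/story": 2, "https://example.org/interview": 1}))
# {'https://example.com/story': 667, 'https://example.org/interview': 333}
```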

This is just the germ of an idea, and it may well be totally naive. While I like the bottom-up simplicity of the markup approach, it requires everyone to adopt it and to trust each other to make it work collectively.

What is to keep bad actors from hijacking knowledge units and claiming them as their own? I suppose page index timestamps will need to be the arbiter of provenance, but how do you guarantee that your post gets indexed before someone else’s copy of it?
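As a sketch of what timestamp arbitration could look like, assume each crawler records a first-indexed time for every page where a knowledge unit appears. Claims for the same unit are grouped by a hash of its normalized text, and the earliest-indexed claim wins. The claim format and function names are hypothetical. Note that this only records when a crawler first *saw* the text, not when it was written, which is exactly the weakness raised above.

```python
import hashlib
from datetime import datetime


def ku_key(text: str) -> str:
    """Fingerprint a knowledge unit by hashing its case- and whitespace-normalized text."""
    canonical = " ".join(text.lower().split())
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def resolve_provenance(claims):
    """Keep the earliest-indexed claim for each knowledge unit.

    `claims` is an iterable of (ku_text, url, first_indexed) tuples.
    Returns {ku_key: (url, first_indexed)}; on a tie, the first claim seen is kept.
    """
    winners = {}
    for text, url, first_indexed in claims:
        key = ku_key(text)
        if key not in winners or first_indexed < winners[key][1]:
            winners[key] = (url, first_indexed)
    return winners


# Made-up claims: a copy indexed later than the original.
claims = [
    ("A quiet revolution", "https://copycat.example/post", datetime(2024, 9, 20)),
    ("a quiet  revolution", "https://example.org/interview", datetime(2024, 9, 2)),
]
winners = resolve_provenance(claims)
print(winners[ku_key("a quiet revolution")][0])  # https://example.org/interview
```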

Also, why would the LLMs adopt a system that makes their indexes fundamentally more complex and expensive? My hope is that they eventually see that strip-mining the web is unsustainable. Just as in agriculture, an ecosystem that does not replenish its resources, both large and small, is not a diverse, healthy, and long-lasting ecosystem.

If you’ve made it this far, I’m super-interested in your thoughts and encourage you to get in touch.